Overview

TrueLearn is committed to providing a world-class product that prepares students to become strong life-long learners inside and outside of the classroom. A key part of our data-centric approach is to communicate comparative benchmarks for students to gauge their preparedness.

The TrueLearn Comprehensive Exam Readiness Prediction Model provides an estimated probability of a student passing the real-world standardized exam (COMLEX-USA® Level 1) based on performance on the mock assessment combined with longitudinal study behaviors. It is designed as a decision-support tool to help educators, students, and advisors assess exam readiness. However, the exam readiness predicted probability should not be interpreted as a guarantee of success or failure, but rather as an indicator of likelihood based on historical test-taking patterns and behavioral forensics.

1. How the Exam Readiness Prediction Model Works: Learning Behavior

Research shows that a single Mock Exam score (in our system, the Percent Correct and Assessment Based Probability tiles) provides only a snapshot in time and is most accurate when taken close to the actual exam. We have found that behavioral cues allow for a much earlier exam readiness prediction, which in turn allows for earlier, more targeted remediation.

Our exam readiness prediction engine utilizes 144 distinct variables. While the Mock Assessment score is a significant factor, the model also analyzes “Behavioral Forensics” on the platform: the how and why behind your performance. This approach helps identify students who may have high knowledge but high risk (e.g., due to panic or inconsistency), and students with lower Mock scores who possess the resilience to pass.

The model analyzes data across 9 Key Dimensions:

- Performance Level & Accuracy Trends

  - What it tracks: A unified view of how well a learner performs across the study timeline (both early and late) and how they handle questions of varying difficulty.

  - Why it matters: It distinguishes between a student who is improving and one who is plateauing.

- Study Behavior & Response Patterns

  - What it tracks: How learners interact with the interface during questions.

  - Why it matters: Time-to-answer and pacing are strong indicators of confidence and mastery.

- Study Cadence & Scheduling Habits

  - What it tracks: The regularity of study sessions.

  - Why it matters: Consistency strongly correlates with successful learning and retention.

- Overall Activity & Engagement

  - What it tracks: A big-picture look at volume and commitment.

  - Why it matters: Higher engagement typically leads to deeper knowledge application.

- Difficulty-Based Strengths & Weaknesses

  - What it tracks: Performance segmentation by item difficulty.

  - Why it matters: Getting “Easy” questions wrong is a higher risk signal than getting “Hard” questions wrong.

- Peer & Cohort Comparison

  - What it tracks: Benchmarks learners against similar students at their institution.

  - Why it matters: Provides context for individual performance relative to the specific curriculum of the institution.

- Momentum & Recency Effects

  - What it tracks: Whether the learner is trending upward or downward near the exam date.

  - Why it matters: Momentum is one of the strongest predictors of real exam outcomes.

- Study Mode & Contextual Factors

  - What it tracks: The environment in which questions were answered.

  - Why it matters: “Timed” sessions are more predictive of board reality than “untimed tutor” mode.

- Composite & Interaction Metrics

  - What it tracks: Complex combinations of the above signals.

  - Why it matters: These metrics detect psychological states like “Test Anxiety” or “Fatigue.”
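To make two of these dimensions concrete, the following is a minimal sketch of how difficulty-segmented accuracy and a cohort percentile could be computed from a response log. It is not the production feature code: the function names, the input layout, and the choice to split the learner's own answered items into thirds are all assumptions made for illustration.

```python
from bisect import bisect_left


def accuracy_by_difficulty(responses):
    """Split responses into easiest and hardest thirds and compare accuracy.

    responses: list of (global_p_value, is_correct), where global_p_value is
    the share of all learners who answered the item correctly (higher = easier).
    Assumption: thirds are taken over the learner's own answered items.
    """
    third = len(responses) // 3
    if third == 0:
        raise ValueError("need at least three responses")
    ranked = sorted(responses, key=lambda r: r[0], reverse=True)  # easiest first
    easy, hard = ranked[:third], ranked[-third:]

    def accuracy(rows):
        return sum(1 for _, ok in rows if ok) / len(rows)

    return {
        "easy": accuracy(easy),
        "hard": accuracy(hard),
        "gap": accuracy(easy) - accuracy(hard),
    }


def cohort_percentile(score, cohort_scores):
    """Percent of cohort scores strictly below the given score."""
    ordered = sorted(cohort_scores)
    return 100.0 * bisect_left(ordered, score) / len(ordered)


# Hypothetical learner: (global share correct, answered correctly?)
log = [(0.90, True), (0.85, True), (0.80, False),
       (0.60, True), (0.55, False), (0.50, True),
       (0.30, False), (0.25, False), (0.20, True)]
segments = accuracy_by_difficulty(log)
rank = cohort_percentile(70, [50, 60, 70, 80])
```

A low `easy` value here corresponds to the higher-risk signal described under Difficulty-Based Strengths & Weaknesses, while `rank` places the learner relative to peers at the same institution.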

2. Performance & Validation

By moving from a simple “Score-to-Pass-Rate” conversion to this multi-variable Behavioral Model, predictive accuracy has increased significantly.

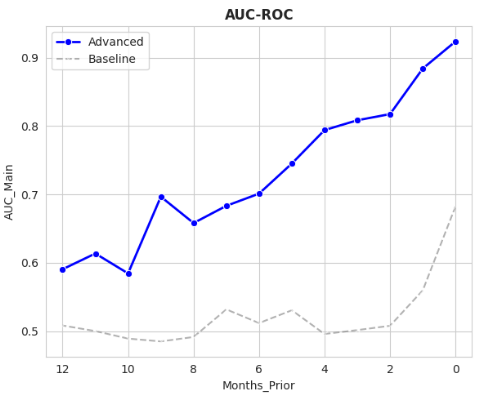

- Earlier Indication: Results demonstrate that the Comprehensive Exam Readiness Model is not only more accurate at predicting real outcomes, but also provides value earlier in the academic year. While both models are more accurate closer to the actual exam, Figure 1 illustrates the predictive value up to 12 months prior to the exam.

Figure 1. Area Under the Curve (AUC) scores for both Comprehensive Exam Readiness Model (Advanced) and Assessment Based Probability of passing (Baseline). Month 0 indicates actual test dates.

- Higher Precision: The model significantly improves the detection of “at-risk” students who might otherwise fly under the radar with passing Mock scores.

- Holistic Scoring: The model aligns Pass/Fail probabilities with Score predictions, eliminating contradictory results (e.g., where a student is predicted to Pass but given a failing score).

- Data Foundation: The model is trained on thousands of student outcomes, utilizing nearly 10x more data than previous iterations.

To ensure this prediction engine is reliable, we didn’t just tweak the math; we completely overhauled the data foundation and the inputs used to make decisions.

The “10x” Data Advantage

While the original Assessment Based Prediction model relied on a smaller historical dataset of approximately 460 students from the pre-2022 era, the new Comprehensive Exam Readiness model is trained on a massive, expanded dataset of over 4,000 students, encompassing both the historical ‘Scored’ era and the modern ‘Pass/Fail’ era. By increasing the volume of training data by nearly an order of magnitude, the system can now recognize behavioral patterns that were previously invisible.

Feature Engineering Shift: From 1 to 144 Input Variables

The most significant change is what the model looks at.

- While the Assessment Based pass probability relies on a limited number of variables associated with the current assessment (see here), the Comprehensive Exam Readiness model analyzes 144 longitudinal behavioral variables. Instead of just looking at a score, it breaks down your entire performance trajectory into 73 raw features and various aggregates indicative of study behavior, including:

- Psychological Features: Detecting “Panic” (rushed streaks), “Doubt” (changing right answers to wrong), and “Resilience” (accuracy immediately after making an error).

- Temporal Patterns: Analyzing “Circadian” rhythms (time-of-day performance, such as late-night versus early-morning studying) and seasonal consistency.

- Cadence & Pacing: Measuring sustainable study streaks versus “Cramming Ratios” (unsustainable volume spikes before an exam).

- Endurance & Fatigue Tracking: Measuring your “Stamina” on complex, time-consuming questions and calculating “Fatigue Drops” (how much your accuracy decays from the start to the end of a long Mock Exam).

- Learning Momentum: Calculating your “Improvement Slope” over the academic year and applying “Recency Weighting” so your current mastery counts more than your early-year struggles.

- Simulation Environment (The “Truth Serum”): Comparing your performance in relaxed, un-timed study sessions against your accuracy in high-pressure, Mock exams to identify hidden test anxiety.

- Knowledge Depth & Vulnerability: Analyzing the “Knowledge Gap” between your accuracy on easy, foundational questions versus your performance on the hardest, highest-yield items on the platform.

- Learner Archetypes: Identifying specific behavioral profiles, such as the “Grinder” (very high question volume with a flat learning curve), to separate busy work from actual knowledge retention.
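Several of the features above reduce to short calculations over a learner's history. The sketch below shows one plausible way to compute an improvement slope, a fatigue drop, and a cramming ratio. The exact definitions used by the model are not published here, so the function names, the quarter-of-the-exam fatigue window, and the three-day cramming window are assumptions.

```python
def improvement_slope(weekly_accuracy):
    """Least-squares slope of accuracy against week index (change per week)."""
    n = len(weekly_accuracy)
    if n < 2:
        raise ValueError("need at least two weeks of data")
    mean_x = (n - 1) / 2
    mean_y = sum(weekly_accuracy) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(weekly_accuracy))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    return sxy / sxx


def fatigue_drop(correct_flags, window_fraction=0.25):
    """Accuracy in the opening window minus accuracy in the closing window
    of one long exam. Positive values mean accuracy decayed."""
    k = max(1, int(len(correct_flags) * window_fraction))
    first, last = correct_flags[:k], correct_flags[-k:]
    return sum(first) / k - sum(last) / k


def cramming_ratio(daily_counts, last_days=3):
    """Share of all answered questions that fall in the final `last_days` days."""
    total = sum(daily_counts)
    if total == 0:
        return 0.0
    return sum(daily_counts[-last_days:]) / total


# Hypothetical learner data
slope = improvement_slope([0.5, 0.6, 0.7])          # steady weekly improvement
drop = fatigue_drop([1, 1, 1, 1, 1, 0, 1, 0])       # 1 = correct, 0 = incorrect
cram = cramming_ratio([10, 10, 10, 10, 20, 20, 20])  # questions per day
```

A learner with a high question volume but a slope near zero would match the “Grinder” profile described above.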

Architecture Shift: From Linear to Nonlinear

- Assessment Based Probability: Uses Logistic Regression & Linear Regression. It assumes study habits have a simple, straight-line relationship with scores (e.g., “More study always equals Higher score”) and treats Pass/Fail and Scoring as separate, disconnected tasks.

- Comprehensive Exam Readiness Model: Uses Nonlinear Classification & Nonlinear Regression. This technology builds “decision trees” that can capture complex, nonlinear behaviors. For example, it understands that “high speed” is good up to a point, but becomes “panic” (and bad) if it exceeds a certain threshold. It also uses a hierarchical structure where the score depends on the Pass/Fail classification, ensuring consistency.
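The two ideas in this comparison can be illustrated with a small sketch. The first function is a hand-written, two-split “tree” showing how a threshold rule captures an effect that a straight line cannot. The second shows one simple way to keep a predicted score consistent with the Pass/Fail call. All thresholds, the passing cut of 400, and the clamping approach are assumptions for illustration; the actual model learns its splits from data and its consistency mechanism is not described in detail here.

```python
PASS_CUT = 400  # illustrative passing score on a three-digit scale (assumption)


def speed_effect(seconds_per_question):
    """Contribution of pacing to readiness, as a tree would express it.

    Faster is better only until it starts to look rushed; a single linear
    coefficient could not represent this up-then-down shape.
    """
    if seconds_per_question < 30:   # hypothetical "panic" threshold
        return -0.15
    if seconds_per_question < 75:   # hypothetical efficient range
        return 0.10
    return -0.05                    # slow pacing


def hierarchical_prediction(pass_probability, raw_score_estimate):
    """Decide Pass/Fail first, then keep the score on the matching side of the cut."""
    passed = pass_probability >= 0.5
    if passed:
        score = max(raw_score_estimate, PASS_CUT)
    else:
        score = min(raw_score_estimate, PASS_CUT - 1)
    return ("PASS" if passed else "FAIL", score)


rushed, efficient, slow = speed_effect(20), speed_effect(60), speed_effect(120)
consistent_pass = hierarchical_prediction(0.62, 391)
consistent_fail = hierarchical_prediction(0.36, 412)
```

With two disconnected models, the second and third calls could have reported “Pass with a failing score” or the reverse; conditioning the score on the classification rules that out.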

COMLEX Level 1 Exam Readiness Prediction Model Performance

The Comprehensive Exam Readiness model demonstrates a substantial leap in accuracy compared to the Assessment Based baseline:

- Ability to Rank Students (AUC): Improved from 0.69 to 0.93.

- Translation: The model is far better at correctly rank ordering students from “Most Likely to Pass” to “Most Likely to Fail.”

- Pass/Fail Classification: The new model provides a much more precise and reliable binary Pass/Fail prediction. By leveraging behavioral forensics, it drastically reduces inconsistencies and ensures that at-risk students are accurately identified earlier in their study timeline.
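For readers unfamiliar with AUC, it can be read as the probability that a randomly chosen student who passed was given a higher predicted probability than a randomly chosen student who failed. The sketch below computes it directly from that definition on made-up data; the function name and sample values are illustrative only.

```python
def auc(probabilities, outcomes):
    """Share of (passer, failer) pairs in which the passer is ranked higher.

    probabilities: predicted pass probabilities.
    outcomes: 1 for an actual pass, 0 for an actual fail.
    Ties count as half a win.
    """
    passers = [p for p, y in zip(probabilities, outcomes) if y == 1]
    failers = [p for p, y in zip(probabilities, outcomes) if y == 0]
    if not passers or not failers:
        raise ValueError("need at least one pass and one fail")
    wins = 0.0
    for p in passers:
        for f in failers:
            if p > f:
                wins += 1.0
            elif p == f:
                wins += 0.5
    return wins / (len(passers) * len(failers))


# Hypothetical predictions and outcomes for five students
example_auc = auc([0.9, 0.8, 0.6, 0.4, 0.3], [1, 1, 0, 1, 0])
perfect_auc = auc([0.9, 0.8, 0.2, 0.1], [1, 1, 0, 0])
```

An AUC of 0.5 corresponds to random ordering and 1.0 to a perfect ordering, which is the scale on which the improvement from 0.69 to 0.93 should be read.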

3. Insights

In the Student Summary tiles, we surface the major behavioral factors that contributed to a student’s predicted score. These insights are driven by SHAP (SHapley Additive exPlanations) values, an attribution method that quantifies how much each of the 144 underlying variables pushed a specific prediction up or down.

By computing this for every individual student, the platform provides actionable feedback so students can understand current strengths and identify exactly which study behaviors to adjust for a better outcome.
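The idea behind Shapley values can be shown on a toy model with two variables. The sketch below computes exact Shapley values by averaging each variable's marginal contribution over every ordering in which variables could be switched from a baseline to the student's values. The toy model, its coefficients, and the feature names are invented for illustration. With 144 variables, enumerating orderings is infeasible, so real systems rely on specialized algorithms rather than this brute-force approach.

```python
from itertools import permutations


def shapley_values(predict, student, baseline):
    """Exact Shapley values for one prediction relative to a baseline.

    predict: function taking a dict of feature values and returning a number.
    student, baseline: dicts with the same feature names.
    """
    names = list(student)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = predict(current)
        for name in order:
            current[name] = student[name]   # switch this feature to the student's value
            updated = predict(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}


def toy_model(f):
    """Made-up readiness model with an interaction term (illustration only)."""
    score = 0.5 + 0.4 * (f["easy_accuracy"] - 0.8) - 0.3 * f["late_cycle_share"]
    if f["easy_accuracy"] < 0.7 and f["late_cycle_share"] > 0.3:
        score -= 0.1  # weak foundations combined with cramming
    return score


baseline = {"easy_accuracy": 0.8, "late_cycle_share": 0.1}
student = {"easy_accuracy": 0.6, "late_cycle_share": 0.5}
contributions = shapley_values(toy_model, student, baseline)
```

The contributions always sum to the difference between the student's prediction and the baseline prediction, which is what allows each insight to be read as a share of the overall result.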

Table 1 defines the most common behavioral insights you will see highlighted in your reports.

| Insight Term | Definition |
| --- | --- |
| Easy-Question Accuracy | How well you answer the most straightforward, widely-understood exam questions. Specifically tracks overall accuracy on the top 33.33% easiest questions (based on global difficulty). |
| First-Attempt Accuracy | How often you answer a question correctly the very first time you see it. This measures true baseline knowledge before any re-testing or memorization occurs. |
| Difficulty Accuracy Gap | The difference in accuracy between the easiest and hardest questions. Helps distinguish between careless errors and genuine, high-complexity knowledge gaps. |
| Recent Consistency | How steadily you maintain your accuracy and study engagement over time. Calculated using the inverse standard deviation of daily question volume and days between assessments, weighted by the log of your total question volume. |
| Recent Accuracy Trend | Your pattern of getting questions right, weighted heavily toward your most recent performance. Uses an exponentially-weighted half-life (e.g., 7, 14, 40, or 80 days) to evaluate short-term memory momentum versus long-term retention. |
| Recent Study Volume | How actively you have been answering questions. Specifically tracks the total count of questions answered in the Mock Assessment and the 7- to 14-day windows immediately prior to the cutoff date. |
| Late-Cycle Activity | The proportion of your total practice completed specifically in the last 3 days of study. Used to track cramming (unsustainable volume spikes). |
| Early-Morning Study Habits | How often and effectively you study between 5 AM and 9 AM. Tracks both the raw question count and the percentage of overall practice completed during this window. |
| Late-Night Study Sessions | The proportion of your total practice done during late-night hours. Tracks both the raw question count and the percentage of overall practice completed between 10 PM and 5 AM. |
| Post-Mistake Resilience | How well you maintain performance immediately after getting a question wrong. Measures “tilting” by calculating the drop between your overall baseline accuracy and your post-mistake accuracy. |
| Second-Guessing Rate | How often you change a correct answer to a wrong one under doubt. Tracks the exact rate at which a student switches from a correct selection to an incorrect one. |
| Time-on-Wrong-Answers | How long you engage with difficult questions you miss, indicating analytical effort. Measures the average time spent (in seconds) on questions that were answered incorrectly. |

Table 1: Insight Definitions & Variable Mapping
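Three of the definitions in Table 1 translate almost directly into code. The sketch below is a plausible reading of Recent Accuracy Trend, Post-Mistake Resilience, and Second-Guessing Rate, not the production implementation. The input layouts and function names are assumptions, as is the choice to divide the second-guessing count by all answered questions.

```python
def recency_weighted_accuracy(responses, half_life_days):
    """Accuracy where each response's weight halves every `half_life_days`.

    responses: list of (days_before_cutoff, is_correct).
    """
    weights = [0.5 ** (age / half_life_days) for age, _ in responses]
    hits = sum(w for w, (_, ok) in zip(weights, responses) if ok)
    return hits / sum(weights)


def post_mistake_drop(correct_flags):
    """Overall accuracy minus accuracy on questions answered right after a miss.

    Positive values indicate "tilting"; zero or negative values indicate resilience.
    """
    overall = sum(correct_flags) / len(correct_flags)
    after_miss = [cur for prev, cur in zip(correct_flags, correct_flags[1:]) if not prev]
    if not after_miss:
        return 0.0
    return overall - sum(after_miss) / len(after_miss)


def second_guessing_rate(selections):
    """Share of questions where a correct first selection became an incorrect final one.

    selections: list of (first_selection_correct, final_selection_correct).
    """
    switched = sum(1 for first, final in selections if first and not final)
    return switched / len(selections)


# Hypothetical learner data
trend_7d = recency_weighted_accuracy([(0, True), (7, False), (14, True)], half_life_days=7)
tilt = post_mistake_drop([1, 0, 1, 0, 0, 1, 1, 1])
doubt = second_guessing_rate([(True, False), (True, True), (False, True), (False, False)])
```

Running `recency_weighted_accuracy` with a short half-life (7 or 14 days) emphasizes current momentum, while a long half-life (40 or 80 days) gives older work more say, which mirrors the short-term versus long-term distinction in the table.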

How to Improve: Actionable Strategies for Top Predictors

From our analysis of over 4,000 students, certain behavioral factors consistently rank as the top predictors of exam outcomes. If your SHAP insights highlight any of the following areas as a risk or negative driver, here are the immediate actions you should take to course-correct:

- If you are flagged for Easy-Question Accuracy:

  - The Action: Stop focusing on low-yield edge cases or highly complex specialty questions. Your primary risk is a gap in core medical concepts. Prioritize reviewing “must-know,” universally tested foundational material immediately.

- If you are flagged for First-Attempt Accuracy:

  - The Action: Focus on active recall and initial learning strategies. Avoid the trap of simply re-doing familiar questions you’ve already seen just to artificially inflate your overall average.

- If you are flagged for low “Simulation” Mode Usage:

  - The Action: Transition away from “Tutor” mode. The model expects you to be practicing under test-day conditions. Increase the volume of strict, timed practice blocks to build your pacing and readiness.

- If you are flagged for Stamina Drops:

  - The Action: You are experiencing cognitive fatigue on complex questions. Build your endurance by taking longer, uninterrupted practice blocks (e.g., 40+ questions at a time) without taking breaks or pausing the timer.

- If you are flagged for Recency / Consistency (7, 14, 40, & 80 Days):

  - The Action: Stop cramming.

    - If your short-term (7- or 14-day) metrics are flagging, you need to stabilize your daily study routine immediately.

    - If your long-term (80-day) metrics are flagging, you are forgetting older material and need to incorporate spaced repetition to keep historical topics fresh.

- If you are flagged for Mock Assessment Accuracy (Easy vs. Hard):

  - The Action: Review your mock exams forensically to identify the root cause. Distinguish between careless errors on easy items (which require pacing and anxiety fixes) and genuine knowledge gaps on hard items (which require deeper content review).

4. Interpreting the Data: Real-World Scenarios

A common point of confusion is why two students with similar Assessment Based Predictions can have different Comprehensive Exam Readiness Scores. This is the “Behavioral Forensics” engine at work.

The Assessment Based score tells us what you know: how many questions you answered correctly or incorrectly, how much time you took, and so on. Your Comprehensive Exam Readiness score includes those performance factors and adds behavioral patterns (such as Recent Consistency, Late-Cycle Activity, Post-Mistake Resilience, and Second-Guessing Rate), which tell us how study behaviors themselves contribute to a student’s score. This, combined with topic-level performance, creates a unique ecosystem for understanding exam performance.

The following examples highlight real, anonymized student outcomes to demonstrate how the new model captures risks that raw performance metrics may overlook.

Type 1: The “False Confidence” Risk

This is the most critical scenario: a student who believes they are safe due to a moderate average on the assessment, but who has underlying behavioral risks (e.g., poor Recent Consistency, high Late-Cycle Activity).

- Student Profile: A student with volatile study habits struggling with foundational concepts (low Easy-Question Accuracy).

- The Reality (Actual Board Outcome): FAIL

- Assessment Based Prediction: 60%, PASS

  - The Assessment Based prediction looked at a generic average and gave a “safe” prediction, giving the student a false sense of security.

- Comprehensive Exam Readiness Prediction: 36%, FAIL

  - The Comprehensive Exam Readiness model correctly identified the risk factors (likely high Late-Cycle Activity, i.e., cramming, or poor Post-Mistake Resilience) and issued a warning. This is the “Check Engine Light” working as intended.

Type 2: The “Borderline” Student

This scenario highlights precision. For students on the borderline, a generic prediction is less accurate. The Comprehensive Exam Readiness model detects the specific drag factors (like a high Second-Guessing Rate or poor Time-on-Wrong-Answers) that pull a student below the line.

- Student Profile: A student in the critical pass/fail “borderline” zone.

- The Reality (Actual Board Outcome): FAIL

- Assessment Based Prediction: 57%, PASS

  - The predicted probability was too high, completely missing the failure risk. The student did well on this Mock Assessment, but this prediction does not account for longitudinal behavioral factors.

- Comprehensive Exam Readiness Model Prediction: 48%, FAIL

  - This prediction agreed with the actual outcome. This precision gives “borderline” students the realistic data they need to consider delaying their exam or seeking aggressive remediation.

Type 3: The “High Performer” Student

The model is not just for at-risk students. It also better rewards high-performing behaviors.

- Student Profile: A strong student seeking confirmation of high mastery to build a solid foundation for Level 2.

- The Reality (Actual Board Outcome): PASS

- Assessment Based Prediction: 57%, PASS

- Comprehensive Exam Readiness Prediction: 76%, PASS

  - The Assessment Based model tends to pull everyone toward the average. The Exam Readiness model recognizes strong Recent Consistency and First-Attempt Accuracy and awards a higher prediction that reflects true potential.

Interpretation Guide: How to Read Student Results

When you look at a student’s prediction, keep these archetypes in mind:

- If the Comprehensive Exam Readiness prediction is lower than the Assessment Based Prediction: The model is likely saving the student from the “Type 1” “False Confidence” scenario. It detects a behavioral risk (such as a large Difficulty Accuracy Gap or low Post-Mistake Resilience) that their raw accuracy is hiding. Treat this as a prompt to advise students to fix their habits and test-taking endurance, not just their knowledge.

- If the student’s prediction is a “Fail” or “Low Probability”: Do not ignore this. As seen in the Type 2 “Borderline” Student scenario, the model is accurate at identifying failing performance even when previous models (or intuition) might suggest a Pass. Review flags like Recent Accuracy Trend and Late-Cycle Activity immediately. Please refer to Table 1 for detailed descriptions.

- If two students have the same Assessment Based pass probability but different Comprehensive pass probabilities:

  - Student A (Lower Probability): Likely displays “anxious” or fatigued behaviors, such as high Late-Cycle Activity (frantic cramming binges), a severe Difficulty Accuracy Gap on complex questions, or rushing blindly (poor Time-on-Wrong-Answers).

  - Student B (Higher Probability): Likely displays resilient and consistent behaviors, such as high Post-Mistake Resilience (effectively rebounding from mistakes), strong Recent Consistency (long-term spaced repetition), and strong First-Attempt Accuracy (true baseline knowledge).
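The reading rules in this guide can be summarized as a small helper that compares the two predictions for one student. This is only a sketch for advisors who want to triage a roster. The 50% Pass/Fail cut is inferred from the scenarios above (60% and 57% shown as PASS, 48% and 36% as FAIL), and the five-point tolerance for treating two predictions as different is an arbitrary assumption.

```python
def interpret(assessment_prob, comprehensive_prob, pass_cut=0.5, tolerance=0.05):
    """Return advisory notes for one student based on the two pass probabilities."""
    notes = []
    if comprehensive_prob < pass_cut:
        notes.append(
            "Predicted Fail or low probability: review Recent Accuracy Trend "
            "and Late-Cycle Activity."
        )
    if comprehensive_prob < assessment_prob - tolerance:
        notes.append(
            "Lower than the Assessment Based prediction: look for a behavioral "
            "risk that raw accuracy is hiding."
        )
    elif comprehensive_prob > assessment_prob + tolerance:
        notes.append(
            "Higher than the Assessment Based prediction: consistent, resilient "
            "behaviors are being credited."
        )
    return notes


false_confidence = interpret(0.60, 0.36)  # Type 1 scenario values
high_performer = interpret(0.57, 0.76)    # Type 3 scenario values
```

For the Type 1 values the helper returns both the low-probability note and the hidden-risk note; for the Type 3 values it returns only the note about credited behaviors.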